Happy Saturday and welcome to Investing in AI. Be sure to check out the AI in NYC podcast. The latest episode on AI Governance with Alayna Kennedy is a fun one. We’ve also launched a new New York GPT bot that specializes in information about NYC, so check it out if you live here or plan to visit.

The AI bubble is often compared to the early days of the railroad or telecom industries to draw parallels between capital expenditures and eventual revenues from those investments. That comparison is misleading, because in railroads and telecom, the expense was incurred to connect things. Every new rail route required steel, labor, land rights, and years of construction. Telecom required trenching fiber across continents. Revenue scaled linearly with physical deployment — every new mile was expensive.

In AI, it’s the opposite. Developing our AI engines is expensive. Connecting things to our AI engines is cheap, and getting cheaper. A new data pipeline. A prompt template. An API integration. An MCP Server. You’re not digging trenches — you’re copying software. This means the capex-to-revenue curve should look fundamentally different from railroads or telecom. Those industries needed decades of physical buildout before revenue caught up. AI needs months.

We’ve been through this talk of economic infeasibility before. In 2009, McKinsey released a report called “Clearing the Air on Cloud Computing” that painted cloud as over-hyped, argued AWS was 144% more expensive than running your own data center, and concluded that large enterprises shouldn’t bother. Cloud computing, they said, could lead to a “trough of disillusionment.” They were spectacularly wrong. Cloud is now a $600B+ market and the backbone of modern enterprise. The same pattern (too expensive, no path to profitability, over-hyped) is playing out with AI today. I think the critics are wrong again.

My argument: if there is a bubble, it is a small one, and it will have a soft pop. Here’s why.

1. We’ve Built the Engines. Now It’s Time to Connect Them.

We’ve invested hundreds of billions of dollars developing AI. Now is the time to connect it. And connecting AI to data and systems to perform useful tasks and generate revenue is cheap — dramatically cheaper than building the models themselves — and the cost is dropping every month.

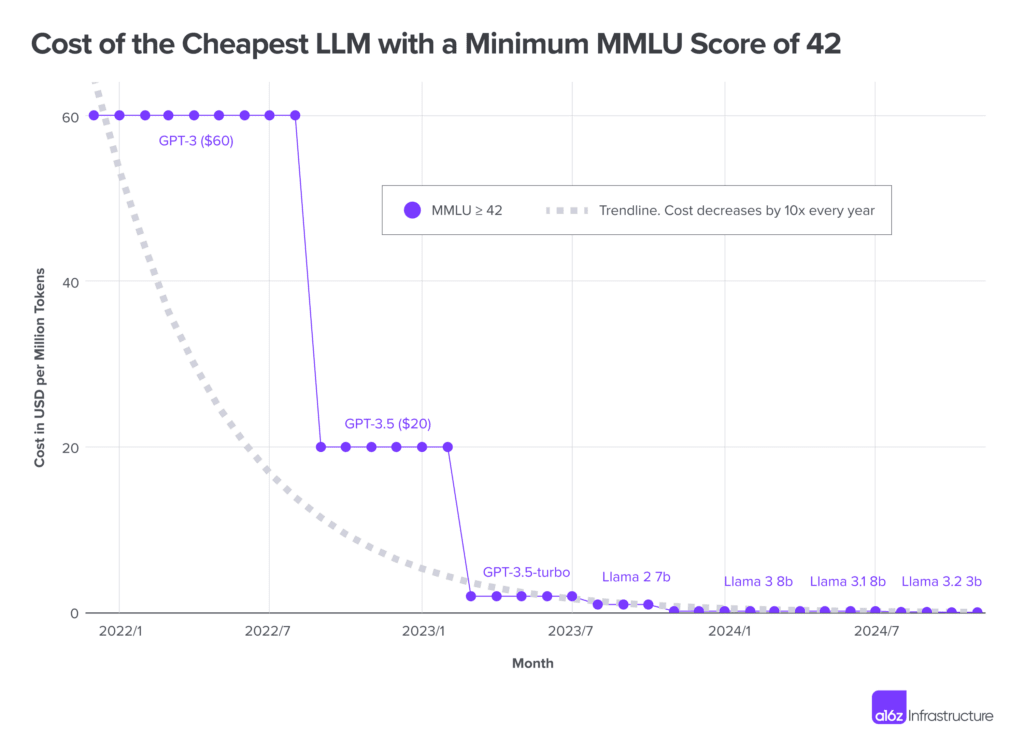

The numbers here are staggering. According to Epoch AI’s research, inference price declines range from 9x to 900x per year depending on the capability level, with the median rate jumping from 50x per year to 200x per year after January 2024. Stanford’s AI Index found that the cost of achieving GPT-3.5-level performance dropped from $20 per million tokens to $0.07 in just 18 months, a decline of roughly 280x. Andreessen Horowitz documented a roughly 10x-per-year cost decline for equivalent inference performance, a rate that outpaces both the PC compute revolution and the dotcom bandwidth explosion.
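As a rough cross-check, the Stanford endpoints can be converted into an annualized rate and set beside the other two figures. This is a minimal sketch that assumes the price fell along a smooth exponential curve over the 18 months, which is a simplification; the function name is illustrative and not taken from any of the cited reports.

```python
def annualized_decline(start_price: float, end_price: float, months: float) -> float:
    """Implied per-year price decline factor, assuming a smooth exponential drop."""
    total_factor = start_price / end_price
    return total_factor ** (12.0 / months)


# Endpoints quoted from the Stanford AI Index: $20 -> $0.07 per million tokens in 18 months.
total = 20.0 / 0.07
per_year = annualized_decline(20.0, 0.07, 18)

print(f"total decline: {total:.0f}x")
print(f"implied annual rate: {per_year:.0f}x per year")
```

Under that assumption the implied rate is a bit over 40x per year, which sits between the Andreessen Horowitz figure and Epoch AI’s pre-2024 median.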

This is the part the bubble narrative misses entirely. The marginal cost of deploying AI into a new use case isn’t another $100M training run. It’s prompt engineering (nearly free), RAG to connect models to proprietary data (no retraining required), or light fine-tuning to adapt behavior for a specific workflow (a fraction of full training cost). The engines are built. Laying the track is cheap.

And companies are already doing it. According to Menlo Ventures’ 2025 report, enterprise generative AI spending tripled to $37 billion in 2025, with more than half — $19 billion — flowing to applications, not infrastructure. Adobe pulled in $125 million in stand-alone AI revenue in a single quarter, built on existing foundation models. Zendesk charges $1.50 per AI-resolved ticket. None of these required a new model. This is what declining marginal deployment cost looks like in practice, and it should lead to faster revenue growth than the capex-only narrative suggests.

2. We’re in the Mainframe Phase. PCs Are Coming.

We are in the mainframe computer phase of AI. Large, centralized systems — frontier models from OpenAI, Anthropic, Google — were necessary to focus development efforts and demonstrate what AI could do. That phase worked. Billions of people have now interacted with these systems. The capabilities are well understood.

But just as computing didn’t stay in the mainframe era, AI won’t stay centralized. We’re moving into the PC era: a more decentralized ecosystem where smaller, specialized models run closer to the work.

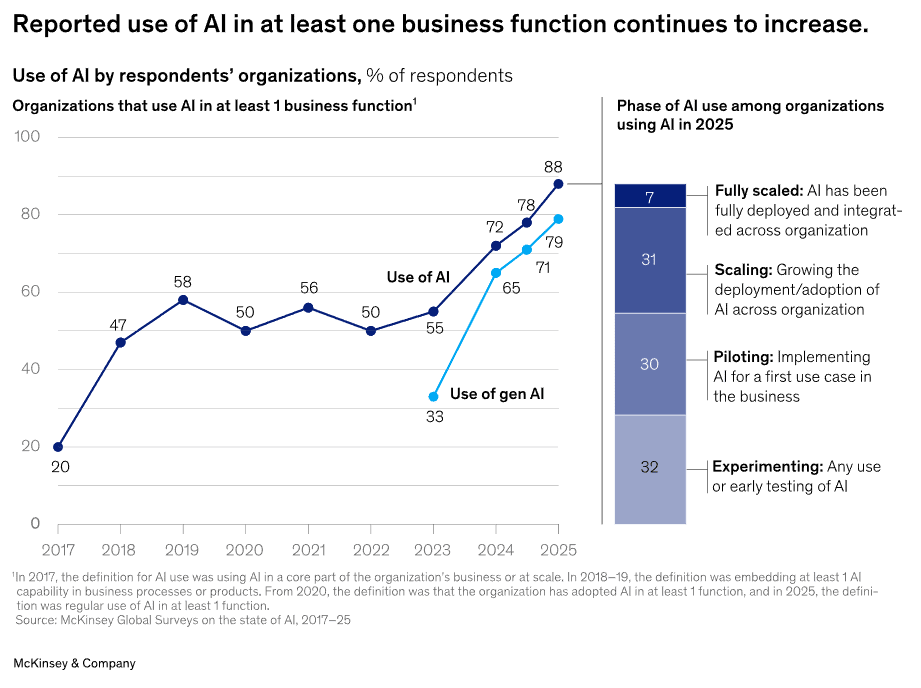

McKinsey’s 2025 Global AI Survey shows 88% of organizations now use AI in at least one business function, up from 20% in 2017, with two-thirds using it across multiple departments. The expansion is lateral — across HR, legal, marketing, ops, finance — not vertical into bigger models.

This is the mainframe-to-PC transition in real time. The money is moving from building the engines to deploying them everywhere. And just like the PC revolution, the real economic impact won’t come from the hardware manufacturers alone — it will come from the millions of applications built on top.

3. Late 2025 Hit a Quality Tipping Point

The quality of models as of late November 2025 hit a tipping point for many applied use cases. End-to-end workflows that were never before possible with AI are now achievable for meaningful categories of work. This isn’t incremental improvement — it’s a threshold crossing.

Coding became AI’s first killer use case, with $4 billion in enterprise spend and 50% of developers now using AI tools daily. But it’s spreading rapidly: customer service automation, document processing, compliance monitoring, sales intelligence, clinical documentation. Morgan Stanley noted that while early LLM use cases were limited to content generation and summarization, the biggest untapped opportunity is AI reasoning applied to enterprise data — context-aware recommendations, process optimization, and strategic planning.

This quality milestone is the difference between “interesting demo” and “production workflow that generates revenue.” And that distinction is everything for the bubble question. The capex was the investment. The quality tipping point is the moment that investment starts converting to returns. We’re there now.

4. The Tools for Decentralization Are Being Built

The tools to help speed up adoption and decentralize AI are actively in development. Three capabilities are converging that will fundamentally change the deployment economics:

Model switching and routing. The ability to dynamically route tasks to the right model — frontier when you need deep reasoning, lightweight when you don’t. You don’t need GPT-4-class intelligence to auto-categorize support tickets or extract fields from an invoice. Intelligent routing alone can cut inference costs by an order of magnitude for many enterprise workloads.

Open source maturity. Open-source models are closing the gap with proprietary alternatives far faster than most analysts expected. According to OpenRouter data, the number of inference providers grew from 27 to 90 in 2025, with popular open-source models now served by 20+ different providers. This creates real pricing competition and deployment flexibility.

Small Task Models. This is the big one. Purpose-built models for common, narrow, proprietary workflows. You don’t need a 400-billion-parameter frontier model for most business tasks. As a proof of concept, at Neurometric we trained a 4-billion-parameter model to beat frontier models on a specific CRM-related work task. That model is so cheap to run it’s nearly free. This is where the world is heading — and it’s the capability set Neurometric is building: the easy ability to switch between models, embrace open source where it makes sense, and create Small Task Models for the workflows that actually drive business value.

These three capabilities together create a deployment layer that is faster, cheaper, and more adaptable than anything the current “bubble” forecasts account for. I see it every day in our customer base as companies are adopting systems of models rather than one single giant model.
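To make the routing claim concrete, here is a minimal sketch of a rule-based router and the cost arithmetic behind it. Everything in it is hypothetical: the tier names, the prices, the task types, and the 95/5 token mix are assumptions chosen only to illustrate the shape of the savings, not measurements from any real deployment or vendor price list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_million_tokens: float  # hypothetical price, not a real quote


# Hypothetical tiers and prices, chosen only to illustrate the arithmetic.
FRONTIER = ModelTier("frontier", 10.00)
LIGHTWEIGHT = ModelTier("lightweight", 0.10)

# Task types assumed to need deep reasoning; everything else goes to the cheap tier.
NEEDS_REASONING = {"contract_analysis", "strategic_planning"}


def route(task_type: str) -> ModelTier:
    """Pick a tier for a task using a simple lookup rule."""
    return FRONTIER if task_type in NEEDS_REASONING else LIGHTWEIGHT


def workload_cost(tasks: list[tuple[str, int]], always_frontier: bool = False) -> float:
    """Total USD cost for a list of (task_type, token_count) pairs."""
    total = 0.0
    for task_type, tokens in tasks:
        tier = FRONTIER if always_frontier else route(task_type)
        total += tokens / 1_000_000 * tier.usd_per_million_tokens
    return total


# Illustrative mix: 95% of tokens in narrow tasks, 5% in reasoning-heavy tasks.
workload = [
    ("ticket_categorization", 60_000_000),
    ("invoice_extraction", 35_000_000),
    ("contract_analysis", 5_000_000),
]

baseline = workload_cost(workload, always_frontier=True)
routed = workload_cost(workload)
print(f"all-frontier: ${baseline:,.2f}  routed: ${routed:,.2f}  savings: {baseline / routed:.1f}x")
```

With these made-up numbers the routed workload costs about one-seventeenth of the all-frontier baseline. The savings depend almost entirely on how much traffic can safely go to the cheap tier, so the mix is the number to measure first in a real system.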

5. The GPU Depreciation Argument Ignores the Inference Revolution

The GPU depreciation argument — that today’s expensive chips will lose value before they pay for themselves — makes logical sense on the surface. But it assumes a relatively static state of the current chip ecosystem and ignores all the innovation happening around inference, which will become 80% of AI workloads within a few short years.

Consider what’s already in motion. NVIDIA’s acquisition of Groq will accelerate its broader push toward inference optimization. The hyperscalers are developing custom inference chips: Google’s TPUs, Amazon’s Trainium, Microsoft’s Maia. And edge NPUs are just coming to market in consumer and enterprise devices. Each of these represents a layer of AI capability and cost efficiency that makes current depreciation models look quaint. The chip landscape two years from now will look nothing like today, and it will make layers of AI possible that don’t seem possible now.

The Real Math: Frontier Models Will Be the Minority

AI inference is set to become one of the biggest markets in the history of the world. It will be trillions, possibly tens of trillions of dollars. My own projection is that roughly 25% of that will require frontier models — and yes, those frontier model labs will do fine. They’ll have a couple of trillion dollars in TAM to work with.

But 75% will run on open-source and small specialized task models. Training those is cheaper. Inference is cheaper still. Our CRM experiment proved this concretely: a 4B parameter model outperforming frontier on a specific business task, running at near-zero cost. Multiply that pattern across thousands of enterprise workflows, and you begin to see the real shape of the market.
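For a sense of scale, the 25/75 split can be applied to a few total market sizes. The totals below are hypothetical placeholders, not forecasts; only the 25% frontier share comes from the projection above.

```python
FRONTIER_SHARE = 0.25  # the projection stated in the text


def split_market(total_usd_trillions: float) -> tuple[float, float]:
    """Return (frontier, open_source_and_small_models) in trillions of USD."""
    frontier = total_usd_trillions * FRONTIER_SHARE
    return frontier, total_usd_trillions - frontier


# Hypothetical total inference market sizes, in trillions of USD.
for total_market in (4.0, 8.0, 20.0):
    frontier, rest = split_market(total_market)
    print(f"total ${total_market:.0f}T -> frontier ${frontier:.0f}T, everything else ${rest:.0f}T")
```

A frontier TAM of a couple of trillion dollars corresponds to a total market of around $8 trillion at this share, which is consistent with the range of trillions to tens of trillions.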

All the economic predictions we make today about AI are based on the economics of training frontier models. They ignore the fact that these frontier models will be a minority of AI use cases as the industry matures.

Wall Street is looking at mainframes and trying to extrapolate what that means for computing’s future. They’re missing the fact that PCs are right around the corner — and PCs changed everything.

When you look out five years, the economics of AI won’t mirror the economics of today. If there’s a bubble, it’s a small one, and it will have a soft pop. The real story is what comes next.

Thanks for reading.